In 2016, I hired a developer to answer a question that still haunts maternal health: can we use structured hospital data to detect patterns tied to severe pregnancy complications, early enough to support prevention?

The developer left the project unfinished. But what he did leave behind matters because it documents a concrete effort: an early, real-world attempt to test whether large-scale discharge data could support pattern detection for preeclampsia and postpartum hemorrhage.

This article is not a claim that I built a clinical system. It is a transparent look at what maternal health AI feasibility work actually looked like in 2016: what data was used, what methods were tested, what was learned, and what it taught me about why prevention requires more than algorithms.

________________________________________________________________________________

The Question I Was Asking

By 2016, I had spent years as a board-certified OB/GYN and a federal subject-matter expert reviewing maternal mortality cases. I kept seeing the same pattern: preventable complications and deaths where warning signs were present in the data but weren’t acted on.

The question wasn’t abstract: **Could structured diagnosis codes from national hospital discharge data reveal patterns that might support earlier recognition of high-risk complications?**

To find out, I commissioned a feasibility analysis focused on two conditions with serious consequences:

- **Preeclampsia**

- **Postpartum hemorrhage**

________________________________________________________________________________

The Data and Methods (What the Report Documents)

Dataset

The analysis used the **2016 HCUP Nationwide Readmissions Database (NRD)**, which contained 17,197,683 discharge records with a Patient Linkage Number, enabling linkage of multiple hospital stays to individual patients.

From this dataset, the developer used:

- 35 ICD-10-CM diagnosis fields

- Gender code

- Individual linkage number

Filtering for Pregnancy-Related Encounters

To define the population, he filtered records using **ICD-10 “O” codes** (pregnancy, childbirth, and the puerperium).

**Result:** 2,157,210 pregnancy-related discharge records (approximately 12.54% of all records in the original dataset), representing 2,001,886 unique individuals.

This step matters. In clinical informatics, you can’t build prevention tools without first defining: **Who is in the population you’re studying?**
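The filtering step can be sketched in a few lines. This is a minimal illustration, not the developer's code: the record layout (a linkage ID paired with a list of diagnosis codes) and the function names are assumptions made for the example.

```python
from typing import Iterable

# Hypothetical record layout: (linkage_id, [diagnosis codes]).
# The real NRD record carries up to 35 diagnosis fields; this shape is illustrative.
Record = tuple[str, list[str]]

def is_pregnancy_related(codes: Iterable[str]) -> bool:
    """True if any diagnosis code falls in the ICD-10 'O' chapter."""
    return any(code.strip().upper().startswith("O") for code in codes if code)

def pregnancy_cohort(records: list[Record]) -> tuple[list[Record], set[str]]:
    """Return the pregnancy-related records and the unique individuals behind them."""
    kept = [rec for rec in records if is_pregnancy_related(rec[1])]
    individuals = {linkage_id for linkage_id, _ in kept}
    return kept, individuals

# Toy data, invented for the example.
sample = [
    ("A1", ["O76", "O60"]),   # pregnancy-related
    ("A1", ["O72"]),          # same person, second stay
    ("B2", ["I10", "E119"]),  # no O code, excluded
    ("C3", ["Z3A", "O60"]),   # pregnancy-related
]
kept, individuals = pregnancy_cohort(sample)
print(len(kept), "records,", len(individuals), "individuals")
```

Counting records and individuals separately is the point: the same person can appear in several stays, which is why the report gives both numbers.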

________________________________________________________________________________

What the Descriptive Analysis Found

Overall Readmission Pattern

The report documented that approximately **93.7% of individuals had a single hospital admission** in the available records.

Caption: Most pregnant individuals (93.7%) had a single hospital admission in the 2016 NRD dataset, but complications changed that pattern.

Frequency of Complications (Within This Dataset)

Within the pregnancy-related population:

- **Preeclampsia: ~4.51%** (90,374 individuals)

- **Postpartum hemorrhage: ~3.38%** (67,610 individuals)
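These percentages can be reproduced from the counts quoted in this article. The short check below uses only those counts. Note the denominators differ: the 12.54% figure is a share of discharge records, while the complication rates are shares of unique individuals.

```python
# Counts as quoted in the article.
total_records = 17_197_683
pregnancy_records = 2_157_210
pregnancy_individuals = 2_001_886
preeclampsia_individuals = 90_374
pph_individuals = 67_610

def pct(part: int, whole: int) -> float:
    """Percentage of `whole` represented by `part`, rounded to two decimals."""
    return round(100 * part / whole, 2)

record_share = pct(pregnancy_records, total_records)
preeclampsia_rate = pct(preeclampsia_individuals, pregnancy_individuals)
pph_rate = pct(pph_individuals, pregnancy_individuals)

print(record_share, preeclampsia_rate, pph_rate)  # 12.54 4.51 3.38
```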

Readmission Patterns in Complication Groups

The report then compared single-admission rates for each complication group:

**Preeclampsia:** ~84.9% had a single admission

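- Interpretation: The single-admission rate for individuals with preeclampsia was **about 9.4% lower, in relative terms,** than for the overall pregnancy cohort (84.9% vs. 93.7%). Put another way, roughly 15.1% had multiple hospital stays, compared with 6.3% overall.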

**Postpartum hemorrhage:** ~89.9% had a single admission

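- Interpretation: The single-admission rate for individuals with PPH was **about 4.1% lower, in relative terms,** than for the overall pregnancy cohort (89.9% vs. 93.7%). Put another way, roughly 10.1% had multiple hospital stays, compared with 6.3% overall.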

These are descriptive observations within the dataset, not claims of causality. Still, they reflect a patient safety lens: complications often accompany greater healthcare utilization and potentially more points at which care can fail.
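The 9.4% and 4.1% figures above match the relative drop in single-admission rate against the 93.7% baseline. That is an inference from the numbers rather than a formula quoted from the report, so treat the function below as an assumed reconstruction.

```python
def relative_decrease(baseline: float, group: float) -> float:
    """Percent by which `group` falls below `baseline`, relative to `baseline`."""
    return 100 * (baseline - group) / baseline

overall_single = 93.7  # % of the pregnancy cohort with a single admission
single_by_group = {"preeclampsia": 84.9, "postpartum hemorrhage": 89.9}

for name, single in single_by_group.items():
    rel = relative_decrease(overall_single, single)
    multi = 100 - single  # share with more than one stay
    print(f"{name}: {rel:.1f}% relative decrease in single admissions; "
          f"{multi:.1f}% had multiple stays vs {100 - overall_single:.1f}% overall")
```

Reading the same numbers as multiple-stay shares (15.1% and 10.1% against 6.3%) makes the gap between the complication groups and the overall cohort easier to see.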

________________________________________________________________________________

The Pattern Exploration Work

Diagnosis Code Co-Occurrence Analysis

The report explored how often specific diagnosis codes occurred in individuals with preeclampsia versus those without, and similarly for postpartum hemorrhage.

The developer described this as calculating a “percent decrease” metric and ordering results to suggest “relevancy from a machine learning standpoint.”

**Examples from the report:**

- For preeclampsia: **O76 code** (delivery complications related to abnormal fetal heart rate) was “~40% more likely” to occur with preeclampsia

- The report also stated individuals with preeclampsia were “~120% more likely to have preterm labor”

- For postpartum hemorrhage: **O14 code** (pre-eclampsia) was “~123% more likely” to occur with PPH

Caption: Pattern exploration example: certain diagnosis codes occurred markedly more often in the preeclampsia group; these became features for ML modeling.

This pattern work serves as a bridge between clinical reality and machine learning features: **understanding which signals tend to travel together** before building predictive models.
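A comparison of this kind can be sketched as follows. The report's exact "percent decrease" formula is not reproduced in this article, so the relative-difference metric here is an assumption, and the patient data is invented purely to make the example run.

```python
from collections import Counter

def code_rates(patients: list[set[str]]) -> dict[str, float]:
    """Fraction of patients in a group whose records contain each code."""
    counts = Counter(code for codes in patients for code in codes)
    return {code: n / len(patients) for code, n in counts.items()}

def relative_difference(with_cond: list[set[str]],
                        without_cond: list[set[str]]) -> dict[str, float]:
    """Percent by which each code's rate in the condition group exceeds
    its rate in the comparison group, ordered from largest to smallest."""
    rates_with = code_rates(with_cond)
    rates_without = code_rates(without_cond)
    diffs = {}
    for code, r_with in rates_with.items():
        r_without = rates_without.get(code, 0.0)
        if r_without > 0:  # skip codes never seen in the comparison group
            diffs[code] = 100 * (r_with - r_without) / r_without
    return dict(sorted(diffs.items(), key=lambda kv: kv[1], reverse=True))

# Toy groups (invented): each set holds one person's diagnosis codes.
with_condition = [{"O76", "O60"}, {"O60"}, {"O76", "O60"}, {"O80"}]
without_condition = [{"O80"}, {"O80", "O60"}, {"O76"}, {"O80"}]

ranked = relative_difference(with_condition, without_condition)
for code, diff in ranked.items():
    print(f"{code}: {diff:+.0f}%")
```

A ranking like this is descriptive only. It shows which codes appear together more often, not why.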

________________________________________________________________________________

The Machine Learning Prototypes

Preeclampsia Model (TensorFlow)

The report states a machine learning model was created using **Google TensorFlow** that:

- Takes a list of 1s and 0s representing whether each diagnosis code exists in an individual’s records

- Outputs a prediction of whether the person will also have a diagnosis of preeclampsia

**Feature representation:** A **1,639-element binary vector** (one position for each unique ICD-10 code in the population)
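The encoding described here is often called a multi-hot vector. A minimal sketch, assuming a vocabulary built from the codes seen in the cohort (the helper names and the four-code toy vocabulary are illustrative; the real vector had 1,639 positions):

```python
def build_vocabulary(patients: list[set[str]]) -> dict[str, int]:
    """Assign each unique code a fixed position, in sorted order."""
    all_codes = sorted({code for codes in patients for code in codes})
    return {code: index for index, code in enumerate(all_codes)}

def encode(codes: set[str], vocab: dict[str, int]) -> list[int]:
    """Return a vector with 1 where the person has the code, else 0."""
    vector = [0] * len(vocab)
    for code in codes:
        index = vocab.get(code)
        if index is not None:  # ignore codes outside the vocabulary
            vector[index] = 1
    return vector

# Toy cohort (invented).
patients = [{"O60", "O76"}, {"O72"}, {"O76", "O99"}]
vocab = build_vocabulary(patients)
print(vocab)                        # {'O60': 0, 'O72': 1, 'O76': 2, 'O99': 3}
print(encode({"O60", "O76"}, vocab))  # [1, 0, 1, 0]
```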

**Dataset balancing:** The populations differed greatly in size (90,374 with preeclampsia vs. 1,911,509 without), so the developer subsampled both to create balanced sets of 90,000 each.
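Balanced subsampling of this kind can be sketched with the standard library. The function name, the fixed seed, and the toy inputs are assumptions for illustration; the article does not say how the developer drew the samples.

```python
import random

def balanced_sample(positives: list, negatives: list,
                    n_per_class: int, seed: int = 0) -> list[tuple]:
    """Draw n_per_class items from each group without replacement,
    label them (1 = has the condition, 0 = does not), and shuffle."""
    rng = random.Random(seed)  # fixed seed so the draw is repeatable
    chosen_pos = rng.sample(positives, n_per_class)
    chosen_neg = rng.sample(negatives, n_per_class)
    labelled = [(x, 1) for x in chosen_pos] + [(x, 0) for x in chosen_neg]
    rng.shuffle(labelled)
    return labelled

# Toy stand-ins: 10 "positive" IDs and 100 "negative" IDs.
positives = list(range(10))
negatives = list(range(100, 200))
dataset = balanced_sample(positives, negatives, n_per_class=8)
print(len(dataset), sum(label for _, label in dataset))  # 16 8
```

One consequence worth keeping in mind: a model evaluated on a 50/50 set is being tested at a very different prevalence from the roughly 4.5% seen in the cohort.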

**Reported performance:**

- **~70% highest accuracy**

- **~68% average accuracy**
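Accuracy is the share of predictions that match the true label. The article does not say what "highest" and "average" were taken over, so the sketch below assumes repeated evaluation runs; the labels and predictions are invented. On a balanced two-class set, always guessing one class scores 50%, which is the baseline to read these numbers against.

```python
def accuracy(y_true: list[int], y_pred: list[int]) -> float:
    """Fraction of predictions that equal the true label."""
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)

def summarize(run_accuracies: list[float]) -> dict[str, float]:
    """Highest and mean accuracy across a set of runs."""
    return {
        "highest": max(run_accuracies),
        "average": sum(run_accuracies) / len(run_accuracies),
    }

# Toy labels and three hypothetical runs of predictions.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
runs = [
    [1, 0, 1, 0, 0, 0, 1, 1],
    [1, 1, 1, 0, 0, 0, 0, 0],
    [0, 1, 1, 0, 0, 1, 1, 0],
]
summary = summarize([accuracy(y_true, pred) for pred in runs])
print(summary)  # {'highest': 0.75, 'average': 0.625}
```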

**Feature reduction test:** When the feature set was reduced from 1,639 codes to 40 codes (selected based on the relevancy analysis), results were “slightly worse”: roughly 65% average accuracy and 68% highest accuracy.
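Reducing the feature set amounts to keeping only the vector positions for the selected codes. A minimal sketch, assuming codes are ranked by the absolute size of a relevancy score (the scores, the ranking rule, and the helper names are all hypothetical):

```python
def top_k_codes(relevancy: dict[str, float], k: int) -> list[str]:
    """Pick the k codes with the largest absolute relevancy score."""
    ranked = sorted(relevancy, key=lambda code: abs(relevancy[code]), reverse=True)
    return ranked[:k]

def reduce_vector(vector: list[int], vocab: dict[str, int],
                  keep: list[str]) -> list[int]:
    """Project a full binary vector onto the kept codes, in `keep` order."""
    return [vector[vocab[code]] for code in keep]

# Toy vocabulary and made-up relevancy scores.
vocab = {"O60": 0, "O76": 1, "O80": 2, "O99": 3}
relevancy = {"O60": 2.0, "O76": 1.0, "O80": -1.5, "O99": 0.1}

keep = top_k_codes(relevancy, k=2)
reduced = reduce_vector([1, 1, 0, 1], vocab, keep)
print(keep, reduced)  # ['O60', 'O80'] [1, 0]
```

In the report's test the cut was from 1,639 positions to 40, so the model saw far fewer of each person's codes.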

Postpartum Hemorrhage Model (TensorFlow)

Similarly, the report documents a TensorFlow model for postpartum hemorrhage using the same approach:

**Dataset balancing:** Subsampled from 67,610 with PPH vs. 1,934,273 without to 65,000 vs. 65,000

**Reported performance:**

- **~70% highest accuracy**

- **~67% average accuracy**

**Feature reduction:** Same pattern: reducing to 40 codes produced slightly worse results.

Caption: TensorFlow model training output showing documented accuracy results in the high-60s to ~70% range using 1,639 diagnosis-code features.

________________________________________________________________________________

What This Report Shows, and What It Doesn’t

What It Documents

✓ A structured-data feasibility approach using a national readmissions dataset

✓ Reproducible pregnancy cohort filtering (ICD-10 O codes)

✓ Descriptive rates for preeclampsia and PPH in that 2016 dataset

✓ Pattern exploration comparing diagnosis-code occurrence between groups

✓ Early TensorFlow prototypes with reported accuracy in the high-60s to ~70% under balanced subsampling

What It Does Not Document

✗ Clinical deployment or prospective validation

✗ Sensitivity/specificity/AUC or other clinical validation metrics

✗ Real-time early warning operating in a hospital workflow

✗ Integration with EHR systems

**Stating those boundaries is not a weakness; it’s a sign of credibility.**
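To show why the missing metrics matter, the sketch below computes sensitivity, specificity, and positive predictive value (PPV). The 70% sensitivity and 70% specificity are hypothetical inputs chosen only for illustration; the report gives accuracy alone, so these are not its results. The 4.51% prevalence is the cohort rate quoted earlier.

```python
def sensitivity(tp: int, fn: int) -> float:
    """Share of true cases the model flags."""
    return tp / (tp + fn)

def specificity(tn: int, fp: int) -> float:
    """Share of non-cases the model correctly leaves unflagged."""
    return tn / (tn + fp)

def ppv_at_prevalence(sens: float, spec: float, prevalence: float) -> float:
    """Probability that a flagged person truly has the condition."""
    true_pos = sens * prevalence
    false_pos = (1 - spec) * (1 - prevalence)
    return true_pos / (true_pos + false_pos)

# Hypothetical confusion counts on a balanced test set of 200 people.
sens = sensitivity(tp=70, fn=30)
spec = specificity(tn=70, fp=30)

balanced_ppv = ppv_at_prevalence(sens, spec, prevalence=0.5)
cohort_ppv = ppv_at_prevalence(sens, spec, prevalence=0.0451)
print(f"PPV on a balanced set: {balanced_ppv:.2f}")
print(f"PPV at 4.51% prevalence: {cohort_ppv:.2f}")
```

Under these assumed inputs, the same model that looks 70% right on a balanced set would be right about roughly one flagged patient in ten at real-world prevalence, which is why accuracy on a balanced sample cannot stand in for clinical validation.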

________________________________________________________________________________

What This Feasibility Work Taught Me

1. You Can’t Build Prevention Tools Without Defining the Population First

The report begins by isolating pregnancy-related encounters using ICD-10 “O” codes. That’s the clinical informatics discipline: **define your denominator before talking about risk.**

2. Descriptive Analysis Reveals Where Complexity Clusters

Even basic statistics showed that individuals with preeclampsia and postpartum hemorrhage had higher rates of multiple hospital stays. That matters for patient safety: **more touchpoints mean more opportunities for things to go wrong.**

3. Pattern Comparisons Bridge Clinical Reality and ML Features

Understanding which diagnosis codes co-occur most often provides a starting point for feature engineering. It’s not just “throw everything into the model”; it’s **understanding what signals travel together clinically.**

4. Early ML Prototypes Must State Limits Plainly

The report documents models with ~70% accuracy using diagnosis codes alone. That’s a feasibility signal, not a clinical-grade tool. **Honesty about what the data can and can’t support is part of patient safety.**

5. More Features Aren’t Always Better, But Context Matters

The 40-code reduced model performed slightly worse than the full 1,639-code model. That suggests **you need comprehensive clinical context**, not just “the most important” codes.

6. Administrative Codes Are a Starting Point, Not the Endpoint

This work used ICD-10 diagnosis codes from discharge records. That’s structured and available at scale, but it’s **retrospective and limited**. Real early warning requires real-time vitals, labs, and clinical notes.

7. Prediction Alone Isn’t Prevention

The most important lesson: even if you could predict preeclampsia with high accuracy, **prediction doesn’t prevent harm if clinicians miss the warning signs due to cognitive bias.**

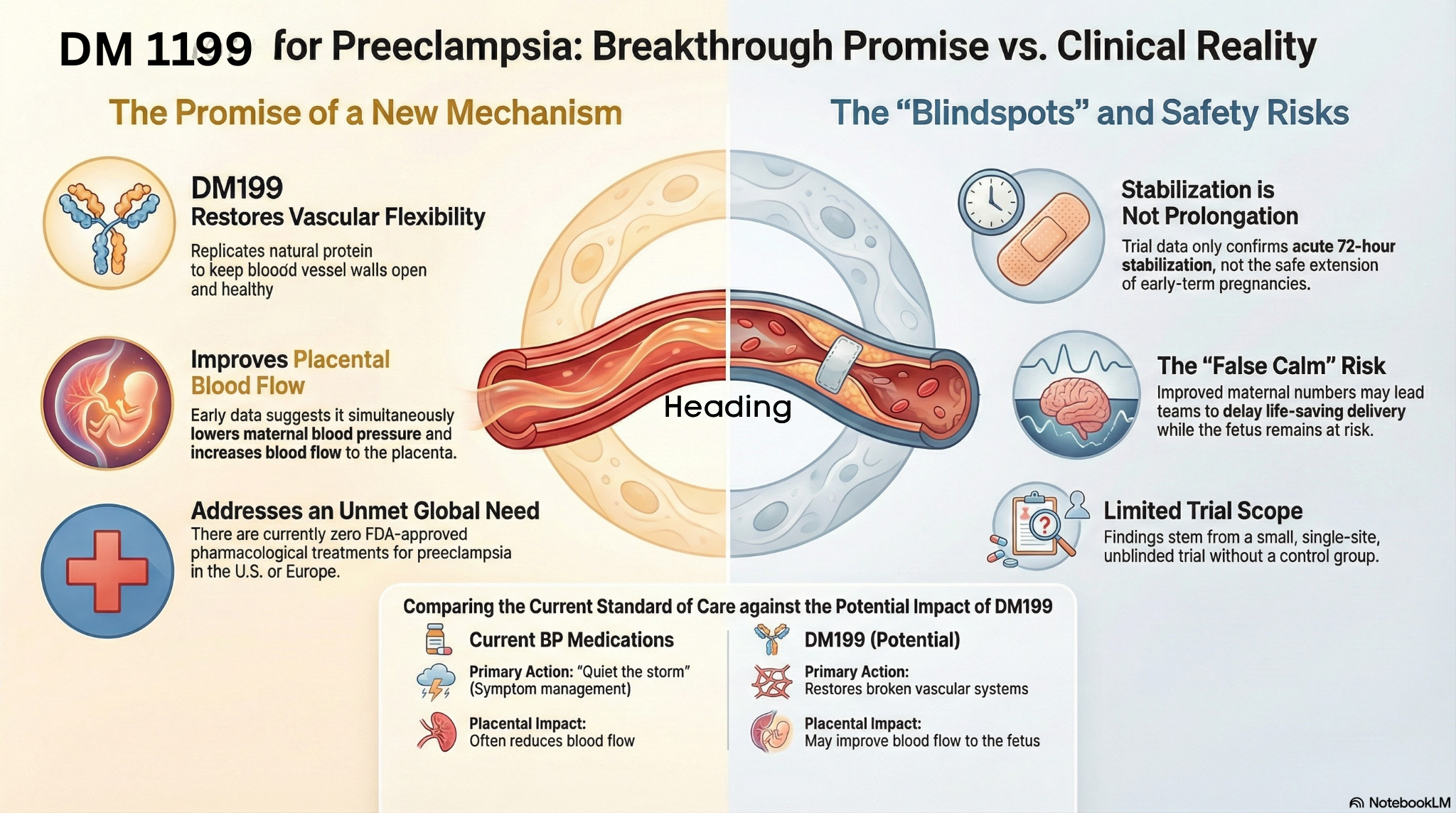

That realization led me to what came next: **Pregnancy Blindspots™**, a clinical decision support system focused on detecting the systematic cognitive failures that cause clinicians to miss diagnoses, not just predicting who’s at risk.

________________________________________________________________________________

Why This Work Still Matters

This report is from 2016. It’s an early artifact. But it documents the kind of foundational work that any credible maternal early-warning effort must respect:

- **Start with a defined population** (who is included, based on what signals)

- **Measure what’s present and how often** (descriptive reality before predictions)

- **Explore which signals travel together** (pattern work that informs features)

- **Prototype carefully and report performance honestly** (including the constraints of the data)

In maternal health, “early warning” is not a slogan. It’s a commitment to **disciplined signal detection in service of prevention**.

And prevention requires more than algorithms. It requires understanding **how and why clinical teams miss the signals that are already there.**

________________________________________________________________________________

Where This Goes Next

I’m currently building partnerships for a pilot study to test **Pregnancy Blindspots™**, a platform that detects systematic cognitive failures in preeclampsia diagnosis. It builds on what this feasibility work taught me: that the gap isn’t just about prediction, it’s about **recognizing when the signals are present.**

**If you’re interested in:**

- Pilot studies for maternal early warning systems

- Collaboration on preeclampsia detection research

- Understanding how cognitive bias affects clinical decision-making in maternal health

I’m open to conversations.

**Follow my work:**

- Substack: https://substack.com/@drlinda017

- LinkedIn: https://www.linkedin.com/in/drlindagalloway/

- Website: https://perinatalsolutions.com/

________________________________________________________________________________

**For researchers and collaborators:** The full feasibility report referenced in this article is available for review in research and collaboration discussions. Contact me via my website.

________________________________________________________________________________

*Linda Burke-Galloway, MD, FACOG

Board-Certified OB/GYN | AI Certified Consult | Founder, Perinatal Solutions LLC

Clinical Informatics Training: Johns Hopkins | Health Futurist: Stanford*